OpenAI WebSocket Mode for Responses API Review: Unlocking Persistent Speed for AI Agents

Persistent AI agents. Up to 40% faster.

Published: 3/1/2026

Product Overview

The OpenAI WebSocket Mode for Responses API targets developers building multi-turn AI applications. It adds a persistent connection layer to the standard Responses API workflow. In multi-step agent interactions, especially those involving heavy tool calling, each turn typically resends the entire context history. That repeated transfer adds overhead that shows up as higher latency and slower responses for the end user. WebSocket Mode addresses the bottleneck by keeping a continuous, stateful channel open between the client and the OpenAI service, so the client transmits only the incremental input for the current turn rather than the full conversational history, with advertised speedups of up to 40%.

This product is squarely aimed at the AI developer community, particularly those designing advanced, stateful AI agents, sophisticated conversational UIs, or intricate workflows that rely heavily on sequential tool execution. The core value proposition is clear: achieving faster, more efficient, and scalable AI interactions without sacrificing the richness of persistent context. For any application where latency is a critical factor—such as real-time customer service bots, complex coding assistants, or dynamic game NPCs—the WebSocket Mode stands out as a must-try infrastructural upgrade.

Problem & Solution: Slaying the Context Overhead Dragon

The fundamental problem this feature solves is the compounding overhead of context transmission in sequential AI workflows. As an agent works through a multi-step task, issuing function calls and receiving new user input along the way, the payload sent with each new request grows roughly linearly with the number of turns, so the total data transmitted over the whole conversation grows roughly quadratically. In heavy tool-calling workflows, where the conversation alternates between user input, system instructions, and tool outputs, this repeated transmission of the entire history inflates end-to-end latency. The friction degrades the user experience, making the AI feel sluggish and unresponsive during long sessions.
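To make the compounding concrete, here is a minimal sketch that counts how many messages cross the wire over a conversation. It rests on two simplifying assumptions: every turn adds exactly one message, and all messages are the same size. The function names are illustrative and are not part of any OpenAI SDK.

```python
def messages_sent_full_resend(turns: int) -> int:
    """Total messages transmitted when turn k resends all k messages so far."""
    return sum(range(1, turns + 1))  # 1 + 2 + ... + turns


def messages_sent_incremental(turns: int) -> int:
    """Total messages transmitted when each turn sends only its new message."""
    return turns


if __name__ == "__main__":
    for turns in (5, 20, 50):
        full = messages_sent_full_resend(turns)
        inc = messages_sent_incremental(turns)
        print(f"{turns:>3} turns: full resend = {full:>5}, incremental = {inc:>3}")
```

Under these assumptions a 20-turn run transmits 210 messages with full resends versus 20 with incremental sends. The count covers data on the wire only; it says nothing about measured latency or billed tokens.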

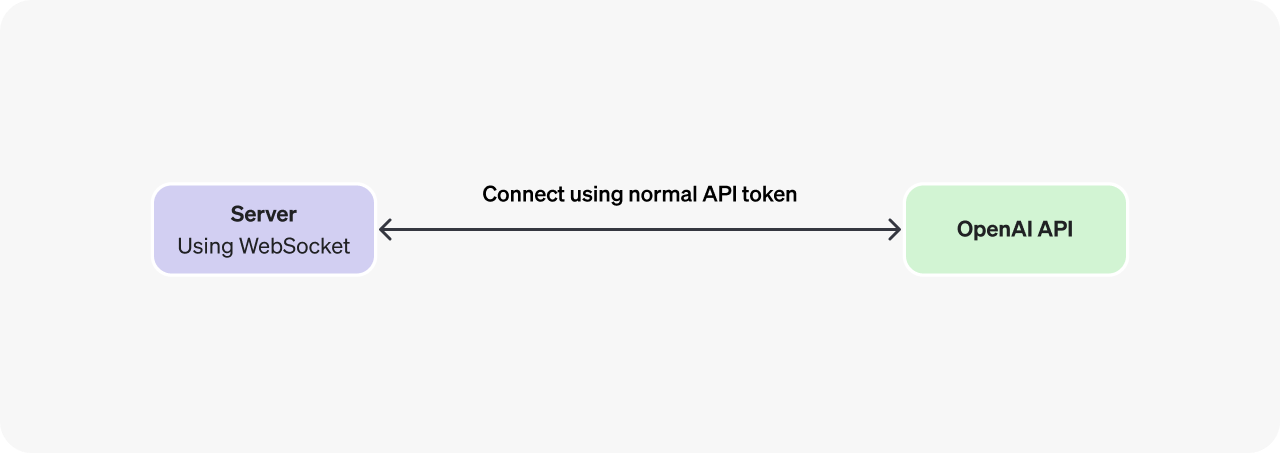

The OpenAI WebSocket Mode for Responses API solves this by leveraging the persistent, bi-directional nature of WebSockets. Instead of opening a new request and rebuilding the full payload for every interaction, it keeps a single connection open. The client sends only the newest piece of information (the latest user message or the latest tool result), while the server retains the necessary state from previous turns. This directly mitigates the context bloat issue, with advertised latency reductions of up to 40% on heavy tool-call workflows. The model shifts from stateless, repetitive transactions to a stateful communication pattern optimized for agentic behavior.
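The difference between the two models can be illustrated with a toy, in-memory sketch. It does not open a real WebSocket and does not reflect the actual wire protocol, which this review does not cover; `ToyStatefulServer` and `stateless_request` are hypothetical names invented for the illustration. The point is only that a server which retains state can rebuild the same context from a single new item per turn.

```python
from dataclasses import dataclass, field


@dataclass
class ToyStatefulServer:
    """In-memory stand-in for a server that remembers prior turns in a session."""
    history: list[str] = field(default_factory=list)

    def receive_increment(self, new_item: str) -> list[str]:
        # Only the new item arrives; the server appends it to its retained state.
        self.history.append(new_item)
        return list(self.history)  # the context the model would see


def stateless_request(full_history: list[str]) -> list[str]:
    """Stateless model: the client must supply the whole history every time."""
    return list(full_history)


if __name__ == "__main__":
    turns = [
        "user: book a flight",
        "tool: search_flights returned 3 results",
        "user: pick the cheapest",
    ]
    server = ToyStatefulServer()
    client_history: list[str] = []
    for item in turns:
        client_history.append(item)
        ctx_stateless = stateless_request(client_history)  # sends every item so far
        ctx_stateful = server.receive_increment(item)      # sends one item
        assert ctx_stateless == ctx_stateful
    print("contexts match on every turn")
```

Both paths end up with an identical context on every turn; what changes is how much the client has to send to get there.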

Key Features & Highlights

The standout feature of this release is the persistent connection architecture itself, which forms the foundation for all performance gains. By shifting from traditional request/response to a WebSocket-based communication channel, the API achieves true incremental input handling.

The most compelling highlight is the latency reduction, advertised as up to 40% for complex agentic use cases. For developers building latency-sensitive applications, that can directly affect user satisfaction and adoption. The gain may also matter operationally, since less data crosses the wire on each turn. Whether it lowers token usage is a separate question that the available material does not settle: the model still has to work with the full context, wherever that context is stored.

While specific implementation details aren't provided, the implied user experience benefit centers on smoother, more fluid multi-turn interactions. Developers can now design deeper, more complex agentic flows with greater confidence that the underlying infrastructure won't become a performance bottleneck. This feature signals OpenAI's commitment to optimizing the infrastructure layer specifically for the emerging class of complex, stateful AI agents.

Potential Drawbacks & Areas for Improvement

As a newly introduced infrastructural mode, potential users should be aware of inherent trade-offs associated with WebSockets. A primary consideration will be connection management and reliability. Developers will need to implement robust handling for connection drops, timeouts, and reconnections, which adds a layer of complexity not present in the simpler, stateless HTTP model. While the performance gain is clear, the engineering overhead for maintaining this persistent state needs to be factored into development time.
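One common way to handle dropped connections is to retry with exponential backoff and jitter. The sketch below is a generic pattern, not something prescribed by OpenAI: `connect_with_backoff` and the `flaky_connect` stand-in are hypothetical names, and the delays are arbitrary defaults. A real client would pass in whatever function opens its WebSocket.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def connect_with_backoff(
    connect: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 8.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call connect() until it succeeds, waiting longer after each failure."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return connect()
        except (ConnectionError, TimeoutError):
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error to the caller
            # Exponential backoff capped at max_delay, with full jitter.
            delay = min(max_delay, base_delay * (2 ** attempt))
            sleep(random.uniform(0, delay))
    raise RuntimeError("unreachable")


if __name__ == "__main__":
    attempts = {"n": 0}

    def flaky_connect() -> str:
        # Hypothetical stand-in for opening a WebSocket: fails twice, then succeeds.
        attempts["n"] += 1
        if attempts["n"] < 3:
            raise ConnectionError("simulated drop")
        return "connected"

    print(connect_with_backoff(flaky_connect, sleep=lambda s: None))
```

Reconnecting is only half of the problem. Whether server-side state survives a dropped connection is not covered in the material reviewed here, so a cautious client would keep its own copy of the conversation in case it has to resend it.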

Furthermore, the feature seems highly specialized for tool-calling and heavy sequential workflows. For simple, single-turn queries or basic chat applications, the performance uplift might be negligible, while the added complexity of managing a WebSocket connection might not be justified. An area for future improvement could be a more granular API that intelligently switches between HTTP and WebSocket based on the perceived complexity of the current turn, providing developers with an abstraction layer to manage the connection logic seamlessly. Clearer documentation on best practices for state synchronization and error handling in the WebSocket context will be crucial for widespread adoption.
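Until such an abstraction exists, a team could approximate it with a small heuristic of its own. The sketch below is purely illustrative: `choose_transport`, its parameters, and the three-turn threshold are assumptions made up for this example, not part of any OpenAI API, and the right cutoff would have to come from measuring a real workload.

```python
from enum import Enum


class Transport(Enum):
    HTTP = "http"
    WEBSOCKET = "websocket"


def choose_transport(
    expected_turns: int,
    uses_tools: bool,
    min_turns_for_ws: int = 3,  # arbitrary threshold, tune against real measurements
) -> Transport:
    """Pick a transport from a rough guess at how long the interaction will run."""
    # Tool calls imply follow-up round trips, so treat them as multi-turn.
    if uses_tools or expected_turns >= min_turns_for_ws:
        return Transport.WEBSOCKET
    return Transport.HTTP


if __name__ == "__main__":
    print(choose_transport(expected_turns=1, uses_tools=False))
    print(choose_transport(expected_turns=10, uses_tools=True))
```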

Bottom Line & Recommendation

The OpenAI WebSocket Mode for Responses API is a powerful, necessary evolution for the modern AI stack. It is a must-try for any developer currently experiencing painful latency spikes in their conversational AI or agentic workflows that rely on repeated context transmission or extensive tool usage. If your application hinges on delivering a snappy, real-time experience across multiple interaction steps, this feature offers a clear, measurable performance advantage by intelligently tackling context overhead. For those just starting out or running simple, infrequent API calls, the standard HTTP endpoint remains the path of least resistance. Overall, this is a significant infrastructural leap that solidifies the platform's capabilities for building the next generation of sophisticated, persistent AI agents.
