PinchBench: The Definitive Evaluation Tool for OpenClaw AI Agents

Find the best AI model for your OpenClaw agent

Published: 3/26/2026

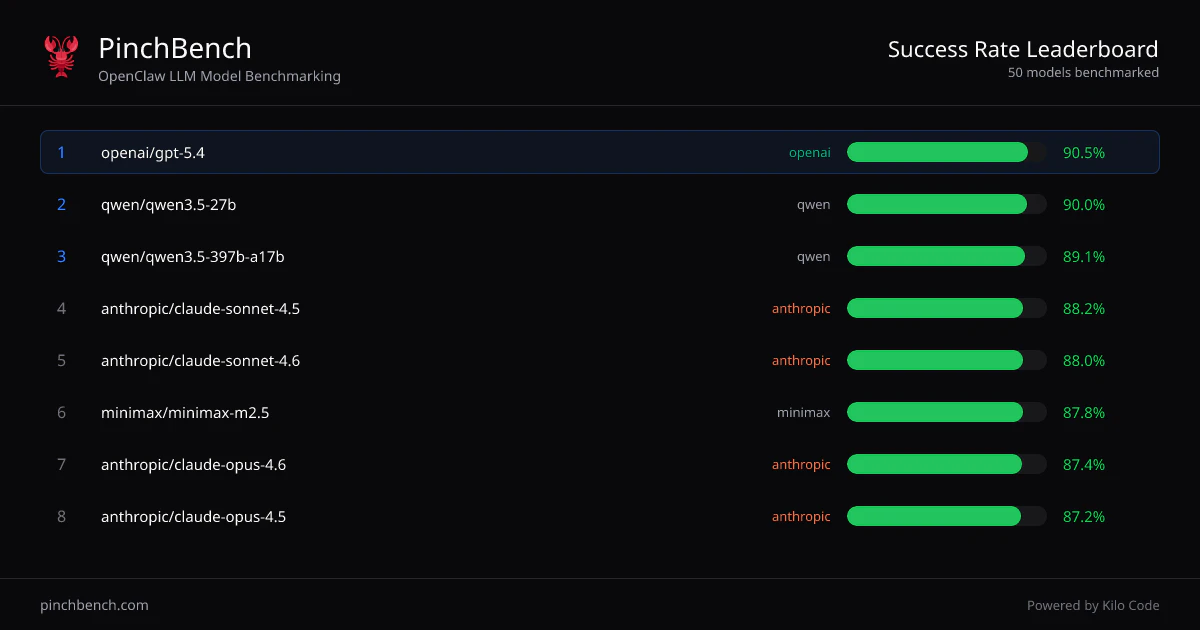

In AI-assisted development, choosing a Large Language Model (LLM) for a specific coding task often comes down to guesswork. PinchBench is a benchmarking system built for developers who use OpenClaw coding agents. Developed by the team at Kilo Code, it tests LLMs against real-world coding challenges, so that model selection can rest on measured results rather than marketing claims.

PinchBench is essentially a sandbox in which different LLMs are given identical, complex coding workflows. It measures success rate, inference speed, and token cost, and presents the results in a dashboard that helps developers tune their agentic workflows. Whether you are building automation scripts or large-scale applications, the aim is for model choice to follow performance metrics rather than hype.

Addressing the "Black Box" Problem

For developers integrating AI into their development environment, the primary challenge is unpredictability. Different models, from GPT-4o and Claude 3.5 Sonnet to smaller, specialized open-source models, behave differently when handling the nuanced, state-aware tasks required by OpenClaw agents. Until now, choosing a model was often a matter of trial and error, leading to wasted time and unnecessary API costs.

PinchBench addresses this with a standardized "stress test" environment. Instead of relying on generic benchmarks such as MMLU or HumanEval, which don't always reflect agentic coding behavior, PinchBench simulates the environment of an OpenClaw agent. This fills a gap in existing tooling: teams can compare models on tasks that exercise the syntax, context window requirements, and logical constraints typical of their own projects.
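The idea of giving every model the same tasks can be sketched in a few lines of Python. This is only an illustration of the general pattern, not PinchBench's actual harness: `run_suite`, the `Agent` and `Task` types, and the two stand-in "models" are all hypothetical names, and plain functions take the place of real LLM calls.

```python
import time
from typing import Callable

# All names here are hypothetical; this is not PinchBench's actual harness.
Agent = Callable[[str], str]              # takes a task prompt, returns output
Task = tuple[str, Callable[[str], bool]]  # (prompt, checker for the output)


def run_suite(agent: Agent, tasks: list[Task]) -> dict[str, float]:
    """Run every task through one agent and report pass rate and wall time."""
    if not tasks:
        raise ValueError("tasks must not be empty")
    passed = 0
    elapsed = 0.0
    for prompt, check in tasks:
        start = time.perf_counter()
        output = agent(prompt)
        elapsed += time.perf_counter() - start
        if check(output):
            passed += 1
    return {"success_rate": passed / len(tasks), "total_seconds": elapsed}


# Stand-in "models": plain functions instead of real LLM calls.
def upper_agent(prompt: str) -> str:
    return prompt.upper()


def echo_agent(prompt: str) -> str:
    return prompt


# The same task list is reused for every agent, which is what makes
# the resulting numbers comparable.
tasks: list[Task] = [
    ("hello", lambda out: out == "HELLO"),
    ("world", lambda out: out == "WORLD"),
]

agents = {"upper": upper_agent, "echo": echo_agent}
results = {name: run_suite(agent, tasks) for name, agent in agents.items()}
```

Because each agent sees an identical task list and an identical checker, any difference in `success_rate` comes from the agent itself rather than from the test setup.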

Key Features and Highlights

The core strength of PinchBench lies in its granular approach to evaluation. Rather than just tracking "success or failure," the platform offers a multifaceted breakdown of model capability. Notable features include:

- Real-World Task Simulation: Benchmarks are based on authentic coding workflows rather than synthetic puzzles, ensuring results are highly relevant to production work.

- Cost-Benefit Analysis: By tracking the total cost per successful task, PinchBench allows teams to identify the "sweet spot" models that offer the best performance-to-price ratio.

- Performance Metrics: Detailed tracking of latency and inference speed, which is crucial for developers who need their coding agents to be responsive in a real-time IDE context.

- Comparative Leaderboards: An intuitive interface that allows you to stack different LLMs against one another, making it easy to spot which model excels at specific logic-heavy or refactoring-heavy tasks.
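To make the cost-benefit metric concrete, here is a minimal sketch of how "total cost per successful task" could be computed from raw run records. The `RunResult` record shape and the `cost_per_success` helper are assumptions made for illustration, not PinchBench's schema, and the numbers are made-up placeholders rather than real benchmark results.

```python
from dataclasses import dataclass


@dataclass
class RunResult:
    """One benchmark attempt (hypothetical shape, not PinchBench's schema)."""
    model: str
    succeeded: bool
    cost_usd: float


def cost_per_success(runs: list[RunResult]) -> dict[str, float]:
    """Total spend per model divided by its number of successful tasks.

    Failed attempts still cost money, so they raise the figure.
    A model with no successes gets infinity.
    """
    totals: dict[str, float] = {}
    wins: dict[str, int] = {}
    for r in runs:
        totals[r.model] = totals.get(r.model, 0.0) + r.cost_usd
        wins[r.model] = wins.get(r.model, 0) + (1 if r.succeeded else 0)
    return {
        m: (totals[m] / wins[m] if wins[m] else float("inf"))
        for m in totals
    }


# Made-up placeholder numbers, for illustration only.
runs = [
    RunResult("model-a", True, 0.50),
    RunResult("model-a", False, 0.50),
    RunResult("model-b", True, 0.25),
    RunResult("model-b", True, 0.25),
]
scores = cost_per_success(runs)
```

In this toy data both models spend the same amount per attempt or less, but the failed run doubles the effective price of `model-a`, which is the kind of effect a per-success cost figure is meant to expose.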

Areas for Improvement and Considerations

PinchBench is a useful addition to the dev-tool ecosystem, but it is still in its early stages. Support for custom, user-defined benchmarks would make it more useful. The platform currently uses a curated set of tasks; letting developers supply challenges from their own internal codebases would make it far more valuable in private enterprise workflows.

Additionally, as "Small Language Models" (SLMs) become more common, adding more local model testing (via Ollama or similar frameworks) would let developers evaluate self-hosted options in the same benchmarking environment. Extending the reporting tools with a "Project Fit" score, which would automatically suggest a model based on the user's budget and latency constraints, would also save developers significant time.
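A "Project Fit" suggestion of this kind could be as simple as filtering models by the user's limits and picking the best of what remains. The sketch below is one possible interpretation, not an existing PinchBench feature: `ModelStats`, `suggest_model`, and the sample figures are all hypothetical.

```python
from typing import NamedTuple, Optional


class ModelStats(NamedTuple):
    """Aggregated benchmark figures for one model (hypothetical shape)."""
    name: str
    success_rate: float        # fraction of tasks passed, 0.0 to 1.0
    cost_per_task_usd: float
    median_latency_s: float


def suggest_model(
    stats: list[ModelStats],
    max_cost_usd: float,
    max_latency_s: float,
) -> Optional[ModelStats]:
    """Return the most successful model that fits both limits, or None."""
    eligible = [
        s for s in stats
        if s.cost_per_task_usd <= max_cost_usd
        and s.median_latency_s <= max_latency_s
    ]
    if not eligible:
        return None
    # Highest success rate wins; ties go to the cheaper model.
    return max(eligible, key=lambda s: (s.success_rate, -s.cost_per_task_usd))


# Made-up placeholder numbers, for illustration only.
stats = [
    ModelStats("model-a", 0.9, 0.80, 20.0),
    ModelStats("model-b", 0.8, 0.20, 10.0),
    ModelStats("model-c", 0.6, 0.05, 4.0),
]
pick = suggest_model(stats, max_cost_usd=0.50, max_latency_s=15.0)
```

With these placeholder figures, the most accurate model is ruled out by both limits, so the suggestion falls to the next best one that fits. A real scoring scheme might weight the criteria instead of applying hard cut-offs.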

Bottom Line and Recommendation

PinchBench is a valuable utility for any developer or engineering lead who uses OpenClaw or is exploring agentic workflows. By taking much of the guesswork out of LLM selection, it lets teams focus on building rather than on trial-and-error model testing. If you are tired of spending hours testing different models for your AI agents only to find that the "smartest" one is too slow or too expensive, PinchBench addresses exactly that problem. It is highly recommended for teams looking to standardize and optimize their AI-augmented coding stack.
