Qwen3.5 Small: Unpacking the Power of Tiny, Intelligent Multimodal AI

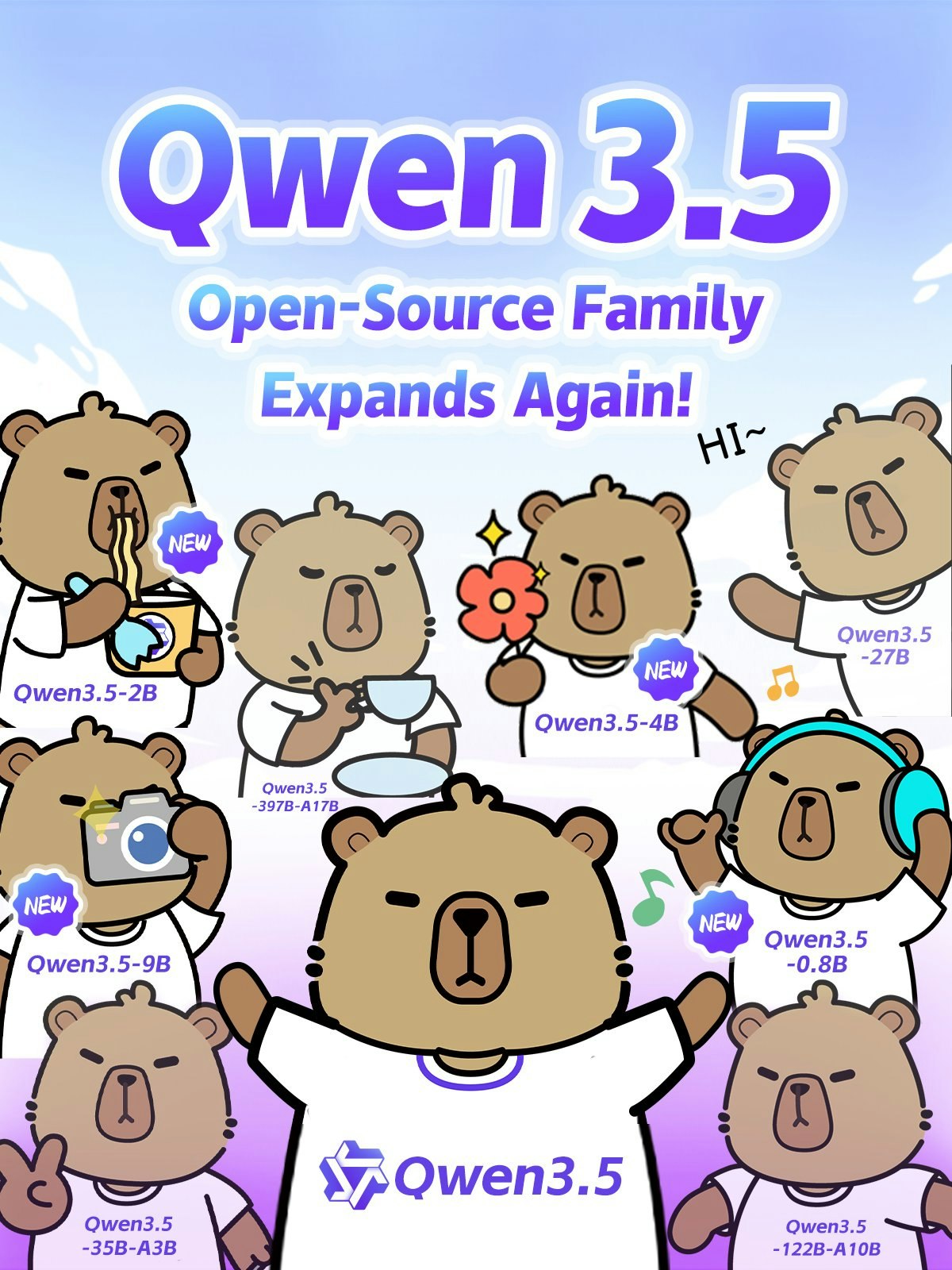

0.8B to 9B native multimodal models with more intelligence, less compute

Published: 3/3/2026

The world of Artificial Intelligence often seems dominated by behemoths: models that require immense computational power. The launch of Qwen3.5 Small signals that efficiency and accessibility are the next frontier. The series spans four models from 0.8B to 9B parameters and aims to deliver strong capability with a much smaller resource footprint. For developers, mobile application creators, and anyone seeking edge AI solutions, Qwen3.5 Small is worth a close look.

Product Overview: Intelligence Meets Efficiency

Qwen3.5 Small represents a step forward from its predecessors, focusing on the balance between model size and performance. The entire series (0.8B, 2B, 4B, and 9B versions) is built with native multimodal capabilities, meaning each model can process different data types such as text and images without a separate external vision component bolted on. This design can reduce latency and simplifies deployment architecture, since there is one model to serve rather than a pipeline of several. The core value proposition is clear: more intelligence, less compute. The release is aimed at democratizing access to capable AI tools by moving intelligence closer to the end-user device.

The target audience for the Qwen3.5 Small series is broad, spanning from researchers who need fast iteration cycles to businesses deploying on resource-constrained hardware. The 0.8B and 2B variants are highlighted as "tiny and fast" enough for edge devices, opening up possibilities for real-time, on-device processing in IoT, mobile apps, and specialized hardware. The 4B model is presented as a strong base for lightweight agents, while the 9B model is positioned as a compact model that is already closing the gap with much larger models.

Problem & Solution: Bridging the Compute Gap

The primary problem Qwen3.5 Small seeks to solve is the growing divide between the computational cost of state-of-the-art AI and the practical realities of deployment. Large language models (LLMs) often necessitate expensive cloud infrastructure, leading to higher operational costs and inherent latency due to data transmission. Furthermore, many real-world applications demand instant responses that cloud-only solutions simply cannot guarantee.

Qwen3.5 Small tackles this with what the release describes as an "improved architecture and scaled RL" (Reinforcement Learning). The combination of architectural refinement and larger-scale RL training is meant to extract more performance from fewer parameters. This is not merely a smaller model; it’s a smarter small model. By offering multimodal processing natively at these constrained sizes, it fills a market gap for deployable, high-performance AI that avoids heavy cloud costs and the round-trip delay of waiting on a remote server.

Key Features & Highlights: Native Multimodality and Scalability

The most compelling aspect of the Qwen3.5 Small series is its commitment to native multimodal processing across all sizes. This capability is crucial for modern applications requiring contextual understanding beyond just text.

Key highlights include:

- Optimized Size Tiers: Offering four distinct sizes (0.8B, 2B, 4B, 9B) allows developers to select the perfect trade-off between speed, memory footprint, and intelligence level for their specific use case.

- Edge Readiness: The 0.8B and 2B models are positioned for edge computing, making them well suited to offline functionality and low-latency scenarios.

- Agent Foundations: The 4B model is presented as a "strong lightweight agent base," suggesting robust reasoning capabilities suitable for complex task automation in smaller packages.

- Performance Efficiency: The 9B model’s ability to "close the gap with much larger models" is a strong technical achievement, promising high-quality output without the overhead of models many times its size.

- Base Versions Available: The release of base versions provides maximum flexibility for fine-tuning and custom domain adaptation by developers.
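
To make the size trade-off in the list above concrete, here is a minimal sketch that estimates weight-only memory for each tier. It is an illustration rather than anything from the release: it assumes the nominal parameter counts (0.8B, 2B, 4B, 9B), uses the common rule of thumb of bytes per parameter at a given precision, and ignores activations, KV cache, and runtime overhead. The helper names `weight_memory_gb` and `largest_tier_that_fits` are hypothetical.

```python
from typing import Optional

# Nominal parameter counts from the article, in billions (assumed exact for this sketch).
PARAMS_BILLIONS = {"0.8B": 0.8, "2B": 2.0, "4B": 4.0, "9B": 9.0}

# Rule-of-thumb storage cost per parameter at common precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions: float, bytes_per_param: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes); weights only."""
    return params_billions * 1e9 * bytes_per_param / 1e9


def largest_tier_that_fits(budget_gb: float, precision: str) -> Optional[str]:
    """Return the largest tier whose weights fit in budget_gb, or None."""
    fitting = [
        name
        for name, params in PARAMS_BILLIONS.items()  # insertion order: small to large
        if weight_memory_gb(params, BYTES_PER_PARAM[precision]) <= budget_gb
    ]
    return fitting[-1] if fitting else None


if __name__ == "__main__":
    # Print a small table of estimated weight sizes per tier and precision.
    for name, params in PARAMS_BILLIONS.items():
        row = ", ".join(
            f"{prec}: {weight_memory_gb(params, b):.1f} GB"
            for prec, b in BYTES_PER_PARAM.items()
        )
        print(f"{name:>5} -> {row}")
```

Real deployments need headroom beyond these figures, so treat the result as a lower bound when matching a tier to a device.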

Potential Drawbacks & Areas for Improvement

The focus on efficiency is laudable, but smaller models come with inherent trade-offs. Even if the 9B model approaches larger counterparts, it should be expected to have less reasoning depth and knowledge retention than models with tens or hundreds of billions of parameters. Developers using the 0.8B or 2B versions must be keenly aware of the ceilings on complex reasoning tasks.

For future iterations, some constructive suggestions would be:

- Detailed Benchmark Comparisons: Providing clear, apples-to-apples comparisons against contemporary models of similar sizes (e.g., the specific improvements in the 4B agent benchmark) would strengthen adoption confidence.

- Expanded Multimodal Modalities: Native multimodality is welcome, but clarifying the exact modalities supported (beyond the implied image and text) and the roadmap for others (e.g., audio, video) would be beneficial.

- Deployment Tools: Offering specialized, optimized inference engines or one-click deployment templates specifically tailored for common edge platforms (like mobile NPU integration) would lower the barrier to entry even further for the tiny models.

Bottom Line & Recommendation

Qwen3.5 Small is a notable release for the current AI landscape. If your project requires fast, efficient AI processing, needs native multimodal support, or must run locally on edge devices or constrained servers, this series belongs on your evaluation list. For mobile developers building the next generation of smart apps, and for enterprise architects focused on cost-effective scaling, Qwen3.5 Small offers a compelling foundation. It pushes forward what is possible in the compact LLM space. Recommended for testing in resource-conscious environments, with deployment decisions best made after benchmarking on your own workloads.
