traceAI: The Missing Link for LLM Observability in OTel-Native Stacks

Open-source LLM tracing that speaks GenAI, not HTTP.

Published: 4/1/2026

Product Overview

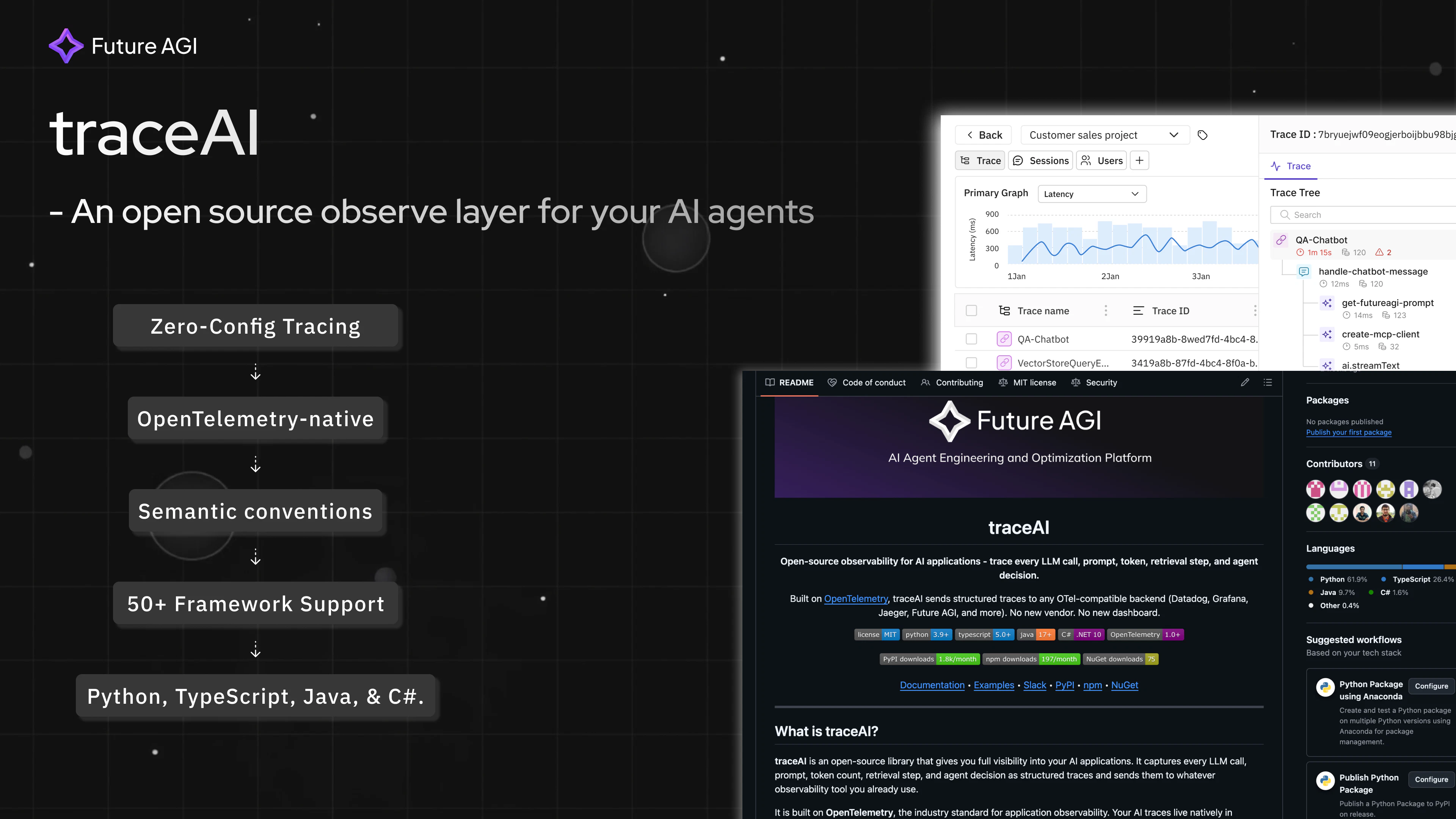

As Generative AI moves into production, developers often face a choice between adopting a specialized, proprietary "AI observability" platform and accepting blind spots in their existing monitoring infrastructure. traceAI sidesteps that dilemma with an open-source, OpenTelemetry-native (OTel) tracing solution built specifically for LLM workloads. Unlike generic HTTP tracers that treat prompts and completions as opaque request bodies, traceAI understands the semantics of Generative AI: prompts, completions, models, and token counts are recorded as first-class data.

Aimed at software engineers and ML practitioners, traceAI tracks prompts, completions, token usage, retrievals, and multi-step agent reasoning chains. Because it is OTel-native, it plugs directly into the observability stacks DevOps teams already run, whether that is Datadog, Grafana, Jaeger, or any other OTel-compliant backend. By avoiding the "walled garden" approach of many AI startup tools, traceAI positions itself as an infrastructure layer for developers who prioritize data portability and architectural consistency.

Problem & Solution

The current market for LLM observability is fragmented. Most tools require developers to pipe their sensitive prompt data into a third-party vendor’s dashboard, raising concerns about data privacy, egress costs, and the overhead of managing yet another SaaS subscription. Furthermore, standard HTTP tracing often fails to capture the "internal life" of an AI agent, such as vector database retrievals or multi-step reasoning processes.

traceAI addresses this by sending LLM-specific telemetry to the systems where the rest of your telemetry already lives. By following OTel semantic conventions, it turns loosely structured LLM interactions into structured, searchable trace data. The result bridges traditional software monitoring and AI application performance, so GenAI components stop being a "black box" in production.
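To make "structured, searchable trace data" concrete, here is a minimal sketch of how one chat completion might be flattened into span attributes. The keys follow the OpenTelemetry GenAI semantic conventions, which are still experimental and have changed between revisions; traceAI's exact attribute set is not covered by this review and may differ. The `LLMCall` dataclass and `to_span` helper are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class LLMCall:
    """Hypothetical summary of one chat completion, as an instrumentor might observe it."""
    provider: str
    model: str
    input_tokens: int
    output_tokens: int


def to_span(call: LLMCall) -> tuple[str, dict[str, object]]:
    """Return (span_name, attributes) using OTel GenAI semantic-convention keys."""
    attributes = {
        "gen_ai.operation.name": "chat",
        "gen_ai.system": call.provider,  # newer revisions of the conventions replace this key
        "gen_ai.request.model": call.model,
        "gen_ai.usage.input_tokens": call.input_tokens,
        "gen_ai.usage.output_tokens": call.output_tokens,
    }
    # The conventions suggest span names of the form "{operation} {model}".
    span_name = f"{attributes['gen_ai.operation.name']} {call.model}"
    return span_name, attributes
```

For example, `to_span(LLMCall("openai", "gpt-4o", 12, 30))` yields the span name `"chat gpt-4o"`. Prompt and completion text are left out on purpose: the GenAI conventions treat message content as opt-in because it is often sensitive.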

Key Features & Highlights

The strength of traceAI lies in its developer-first experience and broad compatibility. With just two lines of code, teams can instrument entire applications, making adoption nearly frictionless.
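The review does not reproduce traceAI's setup calls, so the following is not its API. It is a stdlib-only sketch of the general technique behind "two lines to instrument" tools: an instrumentor swaps a client method for a wrapper that times each call and records a span-like entry, leaving application code unchanged. `EchoClient`, `instrument`, and `SPANS` are hypothetical stand-ins for a real SDK client, an instrumentor, and an OTel exporter.

```python
import functools
import time

SPANS: list = []  # stand-in for an OTel span exporter's buffer


class EchoClient:
    """Hypothetical LLM client; a real one would call a provider's API."""

    def complete(self, model: str, prompt: str) -> dict:
        words = prompt.split()
        # Fake "token" counts: one token per whitespace-separated word.
        return {"text": prompt.upper(), "input_tokens": len(words), "output_tokens": len(words)}


def instrument(cls: type) -> None:
    """Replace cls.complete with a wrapper that records one span-like dict per call."""
    original = cls.complete

    @functools.wraps(original)
    def wrapper(self, model: str, prompt: str) -> dict:
        start = time.perf_counter()
        result = original(self, model, prompt)
        SPANS.append({
            "name": f"chat {model}",
            "duration_s": time.perf_counter() - start,
            "gen_ai.request.model": model,
            "gen_ai.usage.input_tokens": result["input_tokens"],
            "gen_ai.usage.output_tokens": result["output_tokens"],
        })
        return result

    cls.complete = wrapper
```

Calling `instrument(EchoClient)` once at startup is the "one line"; every later `complete` call then lands in `SPANS` without touching the calling code. A production instrumentor would also record exceptions, set span status, and guard against instrumenting the same class twice.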

- Deep GenAI Semantic Awareness: traceAI captures the nuances that matter most, including individual prompts, LLM responses, token counts, and retrieval context, rather than just basic request-response metrics.

- Unified Backend Support: Because it exports to any OTel-compatible backend, your LLM traces appear alongside your existing application traces, logs, and metrics, providing a single pane of glass for troubleshooting.

- Framework-Agnostic Ecosystem: It supports over 35 frameworks and SDKs, including OpenAI, Anthropic, LangChain, CrewAI, and DSPy, so it fits most common GenAI tech stacks.

- Multi-Language Parity: Whether your team is building in Python, TypeScript, Java, or C#, traceAI offers full feature parity, preventing "siloed" instrumentation across polyglot microservices.

- Open Source (MIT): Because traceAI is MIT-licensed, you can inspect, fork, and modify the instrumentation layer yourself, which avoids vendor lock-in and makes internal audits of your observability code straightforward.
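Cross-language parity and unified backends both depend on one request keeping a single trace identity as it crosses Python, TypeScript, Java, or C# services. OTel stacks usually carry that identity in the W3C Trace Context `traceparent` header, which any compliant SDK can read. The stdlib-only sketch below builds and parses that header to show how an LLM span in one service joins the same trace as the HTTP span that called it. The helper names are hypothetical; in practice the OTel SDK's propagators handle this.

```python
import re
import secrets
from typing import Optional, Tuple

# version "00" - 32-hex trace-id - 16-hex parent-id - 2-hex trace-flags (lowercase only)
_TRACEPARENT = re.compile(r"00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})")


def make_traceparent(trace_id: Optional[str] = None, sampled: bool = True) -> str:
    """Build a traceparent for a new span, reusing trace_id to stay in the same trace."""
    trace_id = trace_id or secrets.token_hex(16)  # 16 random bytes -> 32 hex chars
    span_id = secrets.token_hex(8)                # 8 random bytes -> 16 hex chars
    flags = "01" if sampled else "00"
    return f"00-{trace_id}-{span_id}-{flags}"


def parse_traceparent(header: str) -> Optional[Tuple[str, str, bool]]:
    """Return (trace_id, parent_span_id, sampled), or None if the header is invalid."""
    match = _TRACEPARENT.fullmatch(header)  # this sketch accepts version 00 only
    if match is None:
        return None
    trace_id, parent_id, flags = match.groups()
    # All-zero ids are explicitly invalid under W3C Trace Context.
    if trace_id == "0" * 32 or parent_id == "0" * 16:
        return None
    sampled = bool(int(flags, 16) & 0x01)  # bit 0 is the "sampled" flag
    return trace_id, parent_id, sampled
```

A gateway that receives a request parses the incoming header, then calls `make_traceparent(trace_id)` for each outgoing call, so a downstream LLM service written in any language records its spans under the same trace id.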

Potential Drawbacks & Areas for Improvement

While traceAI excels at integration, it is an infrastructure-focused tool. Users looking for high-level "prompt engineering" interfaces or automatic A/B testing dashboards may find it too technical compared to managed AI observability platforms. Because it plugs into your existing stack, the quality of the insights depends heavily on how well your team configures its existing dashboards (e.g., in Datadog or Grafana) to visualize the data traceAI sends.
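Because visualization is left to your existing backend, someone still has to decide which aggregates a dashboard shows. As an illustration of the kind of query you might configure in Grafana or Datadog, here is a stdlib-only sketch that rolls exported span records up into per-model token totals. The record shape (plain dicts keyed by GenAI semantic-convention attribute names) is an assumption for illustration, not traceAI's export format.

```python
from collections import defaultdict


def tokens_by_model(spans: list) -> dict:
    """Sum input/output tokens per requested model, skipping non-LLM spans."""
    totals = defaultdict(lambda: {"input": 0, "output": 0})
    for span in spans:
        model = span.get("gen_ai.request.model")
        if model is None:
            continue  # e.g. an HTTP or database span in the same trace
        totals[model]["input"] += span.get("gen_ai.usage.input_tokens", 0)
        totals[model]["output"] += span.get("gen_ai.usage.output_tokens", 0)
    return dict(totals)
```

In a real backend the same rollup is a group-by on span attributes in the vendor's query language; the point is that this panel only exists if your team builds it.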

Furthermore, as the project grows, expanding support for niche local LLM runtimes and emerging open-source model frameworks (for example, Ollama- or vLLM-specific hooks) will be critical. The documentation for advanced custom instrumentation could also be expanded to help teams map complex, non-linear agent workflows more effectively.
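For non-linear agent workflows, custom instrumentation usually means wrapping your own steps in spans so retrievals, tool calls, and model calls nest under the agent run that triggered them. OTel SDKs do this with a tracer and a context-propagated "current span"; the stdlib-only sketch below imitates that mechanism with `contextvars` so the parent/child structure is visible. The `span` decorator, `RECORDED` list, and agent functions are hypothetical and are not traceAI's API.

```python
import contextvars
import functools
import itertools

_current_span = contextvars.ContextVar("current_span", default=None)
_span_ids = itertools.count(1)
RECORDED: list = []  # finished span records, in the order they end


def span(name: str, **attributes):
    """Decorator: run the function inside a span parented to the current span."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            record = {"id": next(_span_ids), "parent": _current_span.get(),
                      "name": name, **attributes}
            token = _current_span.set(record["id"])  # children see this span as parent
            try:
                return fn(*args, **kwargs)
            finally:
                _current_span.reset(token)  # restore the caller's span
                RECORDED.append(record)
        return wrapper
    return decorator


@span("retrieve documents")
def retrieve(query: str) -> list:
    return [f"doc about {query}"]


@span("chat demo-model", **{"gen_ai.operation.name": "chat"})
def call_llm(prompt: str) -> str:
    return f"answer using {prompt}"


@span("agent run")
def run_agent(question: str) -> str:
    docs = retrieve(question)
    return call_llm(" ".join(docs))
```

After `run_agent("otel")`, both the retrieval and the model call record the agent run as their parent. With the real OpenTelemetry Python SDK, `tracer.start_as_current_span(...)` plays the role of `span` here and can be used as a context manager or a decorator.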

Bottom Line & Recommendation

traceAI is a must-have for engineering teams that are serious about productionizing GenAI applications without compromising their existing observability architecture. If you already use OpenTelemetry and want a vendor-neutral, privacy-conscious way to monitor your LLM pipelines, it is the gold standard. It is especially well suited to developers who value ownership and want to avoid the "dashboard fatigue" of onboarding yet another SaaS tool. For teams ready to take control of their LLM data, traceAI is a high-value, low-friction addition to the tech stack.
