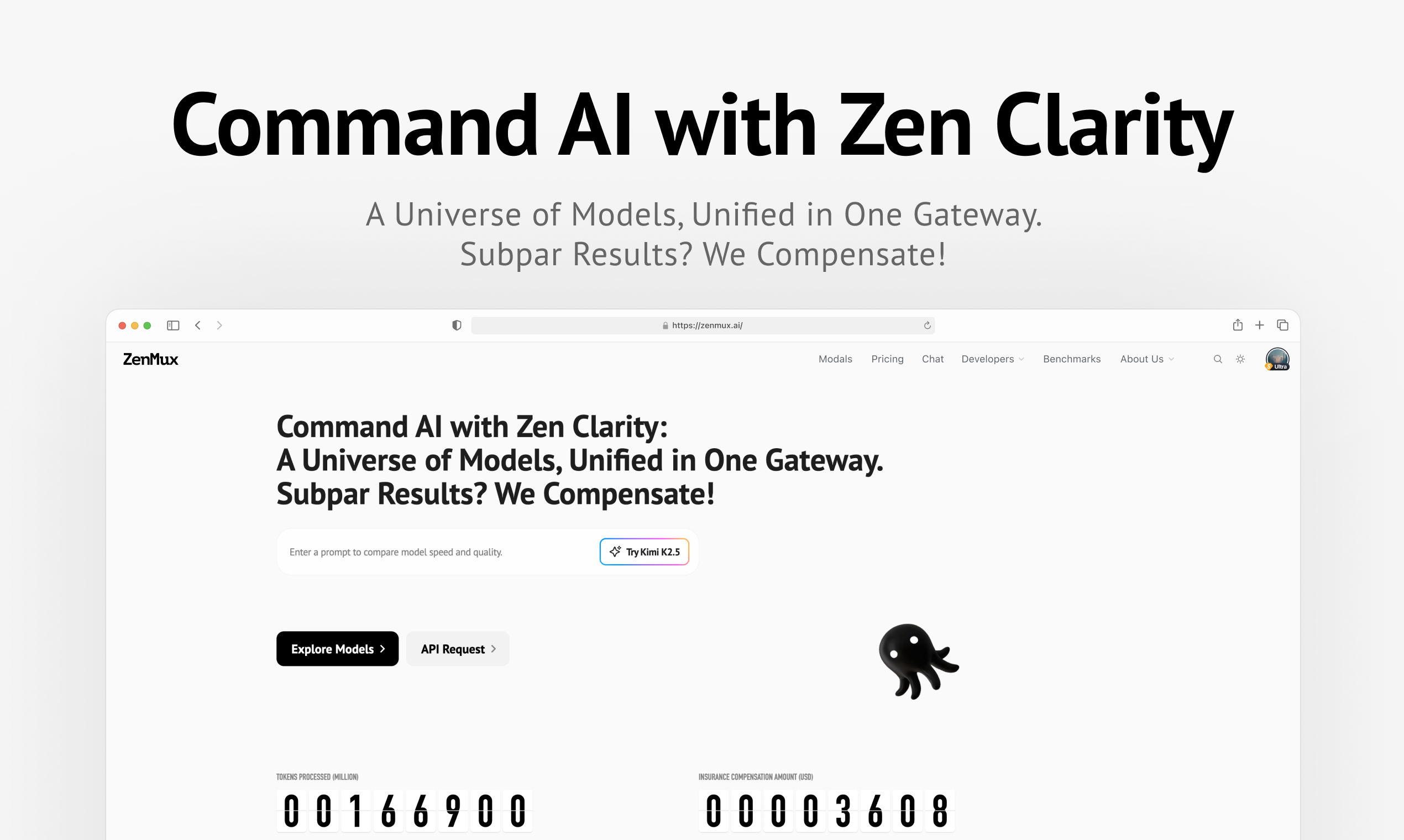

ZenMux Review: Achieving Enterprise-Grade AI Reliability with Automatic Compensation

An enterprise-grade LLM gateway with automatic compensation

Published: 2/13/2026

Product Overview

ZenMux enters the increasingly complex world of Large Language Model (LLM) deployment with a clear value proposition: simplicity combined with assurance. Pitched as an "enterprise-grade LLM gateway," ZenMux acts as the central nervous system connecting your application infrastructure to multiple foundation models, such as GPT and Claude. It hides the differences between providers, endpoints, and deployment strategies behind a single, unified API. This gateway architecture matters for development teams that want to stay agile without giving up production stability.

The core audience for ZenMux is clearly DevOps teams, AI engineers, and CTOs within established or scaling businesses that rely heavily on generative AI services. Its utility shines brightest in scenarios where high uptime, cost optimization, and vendor lock-in mitigation are paramount concerns. By consolidating model access, ZenMux aims to transform chaotic, multi-provider LLM integration into a streamlined, manageable service layer.

The product’s central pillar, its "automatic compensation mechanism," signals a notable shift in how reliability is handled in the LLM space. The feature is designed to keep the service running even when external model providers suffer outages or performance degradation. That makes ZenMux more than a simple proxy: it is positioned as an intelligent traffic manager focused on delivering consistent user experiences.

Problem & Solution

The modern enterprise faces a major pain point when adopting LLMs: model fragility and vendor dependency. A single outage at OpenAI, Anthropic, or any other provider can halt core business functions built on those APIs. On top of that, integrating, monitoring, and optimizing costs across several provider-specific APIs creates technical debt and slows development.

ZenMux addresses this by acting as an intelligent abstraction layer: provider specifics are hidden behind a single integration point, so developers maintain one integration instead of many. Its differentiation lies in how it responds to failure. Conventional failover to a secondary provider often requires manual configuration or reacts slowly; ZenMux instead promises automatic compensation. This suggests an intelligent fallback or throttling mechanism that maintains service levels and shields end users from the infrastructure volatility common in the fast-moving AI ecosystem. The approach targets a critical market gap: "set-it-and-forget-it" reliability for enterprise AI workloads.

Key Features & Highlights

ZenMux’s feature set is built around enterprise requirements: control, performance, and resilience. The Unified API is the most immediate benefit. Because the codebase targets one interface rather than several provider SDKs, it cuts integration time and maintenance overhead when switching between, or combining, multiple state-of-the-art models.
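To make the "one interface instead of several SDKs" idea concrete, here is a minimal sketch of the adapter pattern a unified gateway API typically relies on. It is not ZenMux’s API: the `Gateway`, `ProviderAdapter`, and stub adapter classes are hypothetical, the `vendor/model` identifier format is an assumption, and the stubs return canned strings instead of calling real provider SDKs.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class ChatRequest:
    """Provider-neutral request shape (hypothetical, for illustration only)."""
    model: str
    prompt: str


class ProviderAdapter(Protocol):
    """Anything that can turn a neutral request into a completion string."""
    def complete(self, request: ChatRequest) -> str: ...


class StubOpenAIAdapter:
    # Stand-in for a real SDK call: returns a canned string, no network access.
    def complete(self, request: ChatRequest) -> str:
        return f"[openai:{request.model}] {request.prompt}"


class StubAnthropicAdapter:
    # Stand-in for a second provider with a different native SDK.
    def complete(self, request: ChatRequest) -> str:
        return f"[anthropic:{request.model}] {request.prompt}"


class Gateway:
    """Single entry point: routes an assumed 'vendor/model' string to the matching adapter."""
    def __init__(self, adapters: dict[str, ProviderAdapter]) -> None:
        self._adapters = adapters

    def chat(self, model: str, prompt: str) -> str:
        vendor, _, model_name = model.partition("/")
        if vendor not in self._adapters or not model_name:
            raise ValueError(f"unknown model identifier: {model!r}")
        return self._adapters[vendor].complete(ChatRequest(model_name, prompt))
```

Application code then calls only `Gateway.chat`; swapping `"openai/..."` for `"anthropic/..."` changes the provider without touching any other call site, which is the maintenance saving the paragraph above describes.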

The Smart Routing capability likely handles dynamic traffic distribution, potentially based on latency, cost, or specific model capabilities required by the prompt. For high-throughput applications, this optimization capability is invaluable for controlling operational expenses (OpEx).
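A router of the kind speculated about above could score each healthy provider on normalized latency and cost and send traffic to the lowest score. The sketch below is a generic weighted-score selector, not ZenMux’s routing logic; the `Candidate` fields and the single `latency_weight` knob are hypothetical, and any numbers you feed it would come from your own monitoring.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    p50_latency_ms: float      # observed median latency (assumed to be measured elsewhere)
    usd_per_1k_tokens: float   # blended price per 1,000 tokens
    healthy: bool = True


def pick_route(candidates: list[Candidate], latency_weight: float = 0.5) -> Candidate:
    """Return the healthy candidate with the lowest weighted, normalized score.

    Latency and cost are each divided by their maximum across healthy candidates,
    so both land on a comparable 0..1 scale before weighting.
    """
    healthy = [c for c in candidates if c.healthy]
    if not healthy:
        raise RuntimeError("no healthy route available")
    max_lat = max(c.p50_latency_ms for c in healthy) or 1.0   # avoid division by zero
    max_cost = max(c.usd_per_1k_tokens for c in healthy) or 1.0
    cost_weight = 1.0 - latency_weight

    def score(c: Candidate) -> float:
        return (latency_weight * c.p50_latency_ms / max_lat
                + cost_weight * c.usd_per_1k_tokens / max_cost)

    return min(healthy, key=score)
```

With a fast, pricey candidate (200 ms, $0.03) and a slow, cheap one (800 ms, $0.005), a `latency_weight` of 0.9 selects the fast one and 0.1 selects the cheap one, which is the latency-versus-OpEx trade-off a smart router has to expose.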

The standout feature, however, is the Industry-First Automatic Compensation Mechanism. The technical specifics of how compensation works are not fully documented, but the promise is clear: ZenMux actively works to maintain service availability when a primary LLM provider stumbles. This shifts much of the burden of meeting an uptime target such as 99.99% from the development team to the gateway itself, a major win for reliability engineering in AI.
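Since the compensation logic is not fully documented, the simplest mechanism with a comparable effect is an ordered failover chain. It tries the primary provider, moves to the next on failure, and records what went wrong. This is a generic sketch, not ZenMux’s implementation; `ProviderError` and the provider callables are hypothetical stand-ins for real SDK calls.

```python
from typing import Callable, Sequence


class ProviderError(Exception):
    """Hypothetical error a provider wrapper raises on an outage, rate limit, or timeout."""


def complete_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str, list[str]]:
    """Try providers in priority order.

    Returns (name of the provider that answered, its reply, failures seen before it).
    Raises ProviderError if every provider fails.
    """
    failures: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt), failures
        except ProviderError as exc:
            # Record why this provider was skipped so the event can be logged or alerted on.
            failures.append(f"{name}: {exc}")
    raise ProviderError("all providers failed: " + "; ".join(failures))


# Usage with simulated providers:
def primary(prompt: str) -> str:
    raise ProviderError("503 Service Unavailable")  # simulated outage


def secondary(prompt: str) -> str:
    return f"echo: {prompt}"


print(complete_with_fallback("hello", [("primary", primary), ("secondary", secondary)]))
# ('secondary', 'echo: hello', ['primary: 503 Service Unavailable'])
```

Returning the failure list alongside the reply matters: it is the raw material for the compensation-event visibility that enterprises will want from any gateway that reroutes traffic on their behalf.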

User experience highlights center on developer velocity. By providing a dependable, centralized control plane for all AI traffic, ZenMux allows product teams to focus on building innovative features rather than patching third-party API failures.

Potential Drawbacks & Areas for Improvement

Because ZenMux is a new gateway solution, the main area for constructive scrutiny is the transparency and configurability of its "automatic compensation." Users will need clear insight into the logic behind this feature.

For example:

- Fallback Latency: How quickly does the automatic compensation mechanism kick in? If failover adds several seconds to response time, it may still degrade the perceived user experience.

- Cost Implications: Does automatic compensation favor a more expensive, high-reliability model during a failover event? Enterprises need granular cost controls to prevent unexpected budget overruns during compensation events.

- Model Drift: If the fallback model behaves differently from the primary model, how does ZenMux help developers adjust their prompts to keep outputs consistent?
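The latency and cost concerns can be made concrete as a policy check that a caller, or a gateway, might apply before each fallback attempt: skip any option that cannot answer within the remaining latency budget or that exceeds a price cap. The `FallbackOption` fields, the deadline, and the price ceiling are hypothetical parameters for illustration, not documented ZenMux settings.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class FallbackOption:
    name: str
    expected_latency_ms: float  # e.g. a recent p95 for this provider (assumed to be tracked elsewhere)
    usd_per_1k_tokens: float


def eligible_fallbacks(
    options: Sequence[FallbackOption],
    elapsed_ms: float,
    deadline_ms: float,
    max_usd_per_1k: float,
) -> list[FallbackOption]:
    """Return options, in their original priority order, that fit both budgets.

    An option is kept only if it is expected to answer before the deadline
    and its price does not exceed the ceiling.
    """
    remaining_ms = deadline_ms - elapsed_ms
    return [
        o for o in options
        if o.expected_latency_ms <= remaining_ms and o.usd_per_1k_tokens <= max_usd_per_1k
    ]
```

For example, with 1,500 ms already spent against a 2,000 ms deadline and a $0.03 ceiling, a 900 ms option is dropped for being too slow and a $0.06 option for being too expensive, leaving only options that respect both limits. An empty result is the signal to fail fast rather than let "compensation" quietly blow the latency or cost budget.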

To improve ZenMux further, the team could ship robust, built-in monitoring dashboards that visualize provider health, compensation events, and the latency those events add. Such visibility would make adoption much easier in regulated enterprise environments.

Bottom Line & Recommendation

ZenMux is a compelling offering for any organization scaling its use of generative AI where uptime and cost control are non-negotiable. If your current LLM architecture involves spaghetti code juggling three different provider SDKs, or if you’ve recently suffered a performance hit from an external model outage, ZenMux is a must-evaluate tool. It directly targets the biggest friction points in enterprise AI deployment: vendor dependence and unpredictable availability. For teams prioritizing robust, future-proof LLM infrastructure, ZenMux offers a genuinely innovative layer of operational assurance.
