Kimi K2.5 Review: Native Multimodality Meets Self-Directed Agent Swarms

Native multimodal model with self-directed agent swarms

Published: 1/28/2026

Product Overview: The Next Leap in Open-Source AI Intelligence

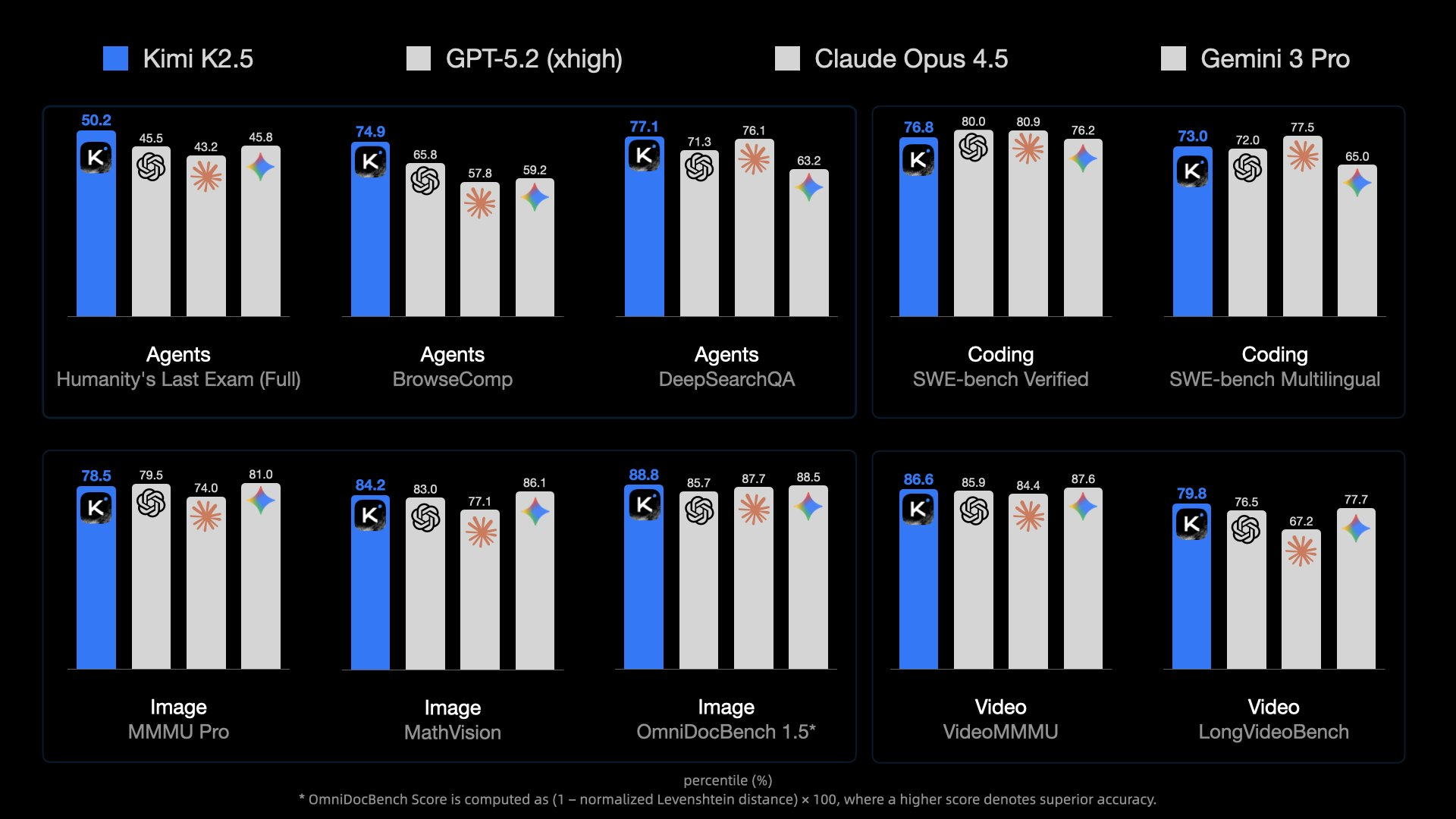

Kimi K2.5 arrives not as an incremental update but as a significant step forward in accessible, high-performance artificial intelligence. Billed as a "native multimodal model with self-directed agent swarms," it enters the rapidly evolving LLM landscape as a formidable contender. The model boasts state-of-the-art (SoTA) performance across critical benchmarks, particularly in complex agent capabilities, code generation, and visual understanding.

This isn't just another text-generation tool. Kimi K2.5 is designed for power users, developers, researchers, and enterprises seeking cutting-edge intelligence that can handle diverse inputs natively. Its core value proposition lies in delivering highly versatile, top-tier intelligence that bridges the gap between traditional language models and holistic reasoning engines. If you are evaluating the leading edge of open-source large language models (LLMs), Kimi K2.5 demands your attention.

Problem & Solution: Breaking the Modality Barrier

The primary challenge facing many current-generation AI models is the forced sequential processing of different data types. A user often needs separate models or complex chaining to process an image, interpret its context, and then generate a code solution based on that visual information. This leads to integration overhead and potential fidelity loss across conversion steps.

Kimi K2.5 solves this by introducing a native multimodal architecture. This means the model processes text and visual inputs simultaneously and holistically from the ground up, leading to richer contextual understanding. Furthermore, the introduction of "self-directed agent swarms" signifies a move beyond simple prompt-response loops. Kimi K2.5 can autonomously orchestrate specialized sub-agents to tackle complex, multi-step problems, filling a crucial market gap for sophisticated, autonomous workflow execution within a single model framework.
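To make the orchestration idea concrete, here is a minimal Python sketch of one coordinator fanning a goal out to specialist sub-agents and collecting their results. It is not Kimi K2.5's implementation: the names `SubTask`, `SPECIALISTS`, `plan`, and `run_swarm` are hypothetical, the decomposition is hard-coded, and each specialist is a stub standing in for what would be a model call.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class SubTask:
    role: str     # which specialist handles this piece
    payload: str  # what that specialist is asked to do


# Hypothetical specialists; in a real system each would be a model call.
SPECIALISTS: Dict[str, Callable[[str], str]] = {
    "vision": lambda p: f"[vision] described: {p}",
    "coder": lambda p: f"[coder] drafted code for: {p}",
    "reviewer": lambda p: f"[reviewer] checked: {p}",
}


def plan(goal: str) -> List[SubTask]:
    """Toy planner with a fixed decomposition (assumption for illustration)."""
    return [
        SubTask("vision", f"screenshot for '{goal}'"),
        SubTask("coder", goal),
        SubTask("reviewer", goal),
    ]


def run_swarm(goal: str) -> List[str]:
    """Run every sub-task concurrently and return results in plan order."""
    tasks = plan(goal)
    with ThreadPoolExecutor(max_workers=len(tasks)) as pool:
        # Executor.map yields results in the same order as the inputs.
        return list(pool.map(lambda t: SPECIALISTS[t.role](t.payload), tasks))


if __name__ == "__main__":
    for line in run_swarm("rebuild login page"):
        print(line)
```

The point of the sketch is the shape of the workflow: plan, dispatch in parallel, gather. A self-directed system would replace the fixed `plan` with decisions made by the model itself.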

Key Features & Highlights: Versatility and Agentic Power

The capabilities packed into Kimi K2.5 are impressive, suggesting a highly optimized and flexible system architecture.

The most notable features center around its architectural versatility:

- Native Multimodality: Seamlessly accepting and reasoning over both visual and text inputs, making it ideal for tasks like interpreting diagrams, analyzing screenshots, or generating descriptions from visual data.

- Agentic Capabilities: Its SoTA performance in Agent tasks suggests advanced planning, tool use, and complex problem decomposition capabilities, moving closer to true autonomous AI assistants.

- Dual Thinking Modes: Support for both "thinking" (deliberative, complex reasoning) and "non-thinking" (fast, direct response) modes lets users trade speed for depth according to the needs of the task at hand.

- Code Generation Excellence: High performance in code-related tasks positions Kimi K2.5 as a powerful tool for software developers looking for advanced coding assistance or debugging partners.

For users, this promises seamless transitions between conversational dialogue and demanding agent workflows, all handled by a single model.

Potential Drawbacks & Areas for Improvement

Kimi K2.5 sets a high bar, but like any new release it has areas that would benefit from further development or clearer documentation.

Given the complexity introduced by agent swarms and dual thinking modes, transparency around performance trade-offs is crucial. Users will need clear guidance on when to utilize the "thinking" versus "non-thinking" modes for optimal latency and accuracy.
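Until such guidance exists, teams may end up writing their own routing rule. The following is a rough sketch of what one could look like, assuming the caller knows whether a request needs multi-step reasoning and how long it can wait. The `Request` fields, the `choose_mode` function, and the 5-second threshold are all hypothetical and not based on measured Kimi K2.5 latencies.

```python
from dataclasses import dataclass


@dataclass
class Request:
    prompt: str
    needs_multi_step: bool   # e.g. planning, debugging, long derivations
    latency_budget_s: float  # how long the caller is willing to wait


def choose_mode(req: Request, thinking_floor_s: float = 5.0) -> str:
    """Return "thinking" or "non-thinking".

    The threshold is illustrative only; tune it against real measurements.
    """
    if req.needs_multi_step and req.latency_budget_s >= thinking_floor_s:
        return "thinking"
    return "non-thinking"


if __name__ == "__main__":
    print(choose_mode(Request("Refactor this module", True, 30.0)))
    print(choose_mode(Request("What is the capital of France?", False, 30.0)))
    print(choose_mode(Request("Refactor this module", True, 1.0)))
```

The rule deliberately falls back to the fast mode whenever the latency budget is too tight, which is the kind of trade-off that published guidance would let users calibrate properly.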

Suggestions for enhancement moving forward could include:

- Tool Integration Framework: While Agentic capabilities are present, a standardized, easy-to-use framework for integrating external APIs or proprietary tools into the agent swarms would significantly boost enterprise utility.

- Benchmarking Clarity: Publicly detailing the specific context windows and computational requirements for achieving SoTA multimodal results would help potential deployers properly budget resources.

- Fine-Tuning Pathways: Clearer documentation or simplified pathways for users to fine-tune the Kimi K2.5 base model on proprietary datasets would accelerate adoption within specialized industries.
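As an illustration of the first suggestion, a standardized tool integration layer could be as small as a registry plus a dispatcher. The sketch below assumes the model emits tool calls as JSON with `name` and `arguments` fields; that format, along with the `tool` decorator, the `TOOLS` registry, and `dispatch`, is hypothetical and not an existing Kimi K2.5 interface.

```python
import json
from typing import Any, Callable, Dict

# Registry mapping tool names to plain Python callables.
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(name: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Decorator that registers a function under a tool name."""
    def register(fn: Callable[..., Any]) -> Callable[..., Any]:
        TOOLS[name] = fn
        return fn
    return register


@tool("convert_currency")
def convert_currency(amount: float, rate: float) -> float:
    """Example proprietary tool: multiply an amount by an exchange rate."""
    return round(amount * rate, 2)


def dispatch(call_json: str) -> Any:
    """Parse a JSON tool call (assumed format) and run the matching tool."""
    call = json.loads(call_json)
    fn = TOOLS.get(call["name"])
    if fn is None:
        raise KeyError(f"unknown tool: {call['name']}")
    return fn(**call["arguments"])


if __name__ == "__main__":
    request = '{"name": "convert_currency", "arguments": {"amount": 10, "rate": 1.5}}'
    print(dispatch(request))
```

Keeping registration declarative means an enterprise team can expose an internal API to agents by decorating one function, without touching the dispatch logic.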

Bottom Line & Recommendation

Kimi K2.5 is a landmark release, successfully merging native multimodal comprehension with sophisticated, self-directed agent intelligence. It stands out as one of the most versatile and powerful open-source model offerings available today.

Who should try this product? Developers working on complex AI agents, researchers testing the boundaries of multimodal reasoning, and technical teams building next-generation code assistants or visual analysis tools.

Overall, Kimi K2.5 represents a significant achievement in democratizing high-end AI performance. It is highly recommended for anyone looking to push the limits of what current LLM technology can accomplish across text and vision inputs. This model sets a compelling new benchmark for the industry.
